
Context: In this post, I reflect on a recent OpenCourse I have been completing. It centres on digital capacity and is one I am so glad I engaged with. No matter what level you feel you are at with digital capacity, this is a very worthwhile course. Specifically, I wanted to use this blog post to reflect on and detail what I decided to focus on (programme focused feedback opportunities), and to show the 'story' of the process I followed. Very worthwhile overall, and a wonderfully supportive experience.

The National Forum OpenCourse

The work itself, and my platform for it, fits well with several of the areas of the DigCompEdu framework. Mapping our experience to this framework was one of the first activities, and one I would recommend people consider. It can really help to further justify the work you are doing, highlight areas you need to develop further and, in the vast majority of cases, realise how well you are already doing in this space.

My Feedback 'addiction' & rationale for this work

Essentially, you will see that the reflection linked above brought me to the need for collating feedback in an actionable way, one that supports self-reflection and engagement with acting on the feedback. I mentioned the University of Surrey's FEATS programme, and you can read more about it in the linked blog post's reflection section. FEATS has always captured my attention.

Hence, I wanted to explore the 'MyFeedback' plugin on Moodle to see how it functions, but also to investigate it from the student point of view. Taking steps towards a more holistic approach to digital feedback provision, collation and action is on my radar. Could this plugin support the collation of Moodle feedback across programmes? Could students be empowered to extract the key points from feedback received, and extract them across modules so they can see the 'big picture'? Well, that was my goal! To work towards achieving this goal, I felt it was important to first test, troubleshoot and use the platform, before planning pilots upon the return to the classroom. Obtaining student feedback at that point, as well as from the programme team, will also be important in order to both build awareness and identify any issues to further enhance its implementation. We are fortunate to have supportive teams in both our CELT and IT department, meaning we can have open discussions there and feed back any issues identified too.

Considering Programme Focused Feedback Opportunities

What I did here...

Once all the feedback had been provided, I was able to swap back to the student role, examine how the feedback appears and investigate how it connects to 'MyFeedback'.

The 'student' view

Can 'MyFeedback' actually help learners?...

Overall, it's a great plugin and one we should encourage staff/students to engage with across their programme/modules. It has the capacity to empower learners to extract key points from feedback across all their Moodle assignments, helping them see the bigger picture on their strengths and areas for improvement that may be common across various modules/lecturer feedback.

There is potential here for students to have everything in one place, an aspect that came through in a recent national survey. A good example of this is when I reflected on previous audio feedback sent as an audio file by email to my students. One later said that on their bus commute a few weeks later, while listening to their library on shuffle, my feedback file started playing in their earphones! I'd prefer not to be 'landing' in their playlists, and having it all in MyFeedback lets them collate it, reflect on it etc. when they wish, and all in the one place.

Any major considerations/things to note?

From my use of the plugin on Moodle, I did come across some areas I feel are worth being aware of... not being negative here, but just building awareness of some points to note if you are getting started with it like me...

Integrating for the longer term?

When I began to consider the longer term, programme wide integration of MyFeedback, some points came to mind....

Overall, would I recommend a National Forum supported OpenCourse?

What's not to love? You get to be part of a wider group across the HE sector, with numerous opportunities to network and share. You are incredibly supported by the group of course facilitators. Complementing this, you are a member of a peer triad group, which truly is a real cornerstone for learning and motivation. I have been so inspired by my fellow triad members on this OpenCourse, with support, understanding, positivity and encouragement filling our meetings. Listening to different projects, viewpoints, experience, expertise is invaluable and the positive comments and suggestions you get from the triad team can make such a difference in your motivation and work too. Your colleagues can see things that maybe you didn't notice or consider, or perhaps they have tried and tested something similar before. All in all, it's been a wonderful journey to have made such strong connections with colleagues and leaders from other institutions.

I honestly can't really remember significantly learning from a previous submission - being honest I guess we were too focused on the score and the approximate 'grade band'. On some occasions, I may have engaged with the demonstrator to enquire about my mark and what I could do to improve it. Otherwise, it was more 'one-way traffic', a process not realising its learning potential. Traditionally, everything was summative, and that was the approach instilled across the board at that time.

Joining Higher Education & Initial Feedback goals.

When I joined the academic community in 2009, one of my early personal goals was to help my students to learn at every opportunity, and develop a feedback-centric, always-improving mindset. The potential was too immense to ignore. My own experience outlined above was a key driver for me with this goal. I approached it with a focused gusto, providing copious amounts of handwritten feedback to each student, with hours of enthusiastic time being put in. However, when I reflect now, I probably did what many do in the early stages of a teaching career, and went all in with an assumption that I was doing the right thing. So what happened? Looking back, I recall hours and hours of feedback generation not fulfilling its purpose. The same mistakes were appearing week-on-week, by the same students, despite my sustained and repeated efforts. Feedback uptake, and future actions from it, were not working. I had to consider some sort of a complete systemic change around feedback.

I worked with students in a partnership approach to learn from their experiences. All of my research around this space was evaluated via online surveys or focus groups, to ensure I was hearing anonymous feedback. It was the only way I could improve what I was doing. With ethical approval acquired in advance, I was able to disseminate my findings at conferences and in publications.

In general, reviewing Figure 5, I have worked on individual approaches developing personalised feedback sheets for example, group approaches to generalise feedback for large groups, while starting to consider digital approaches, e.g. audio or video feedback, which I will detail and reflect on below.

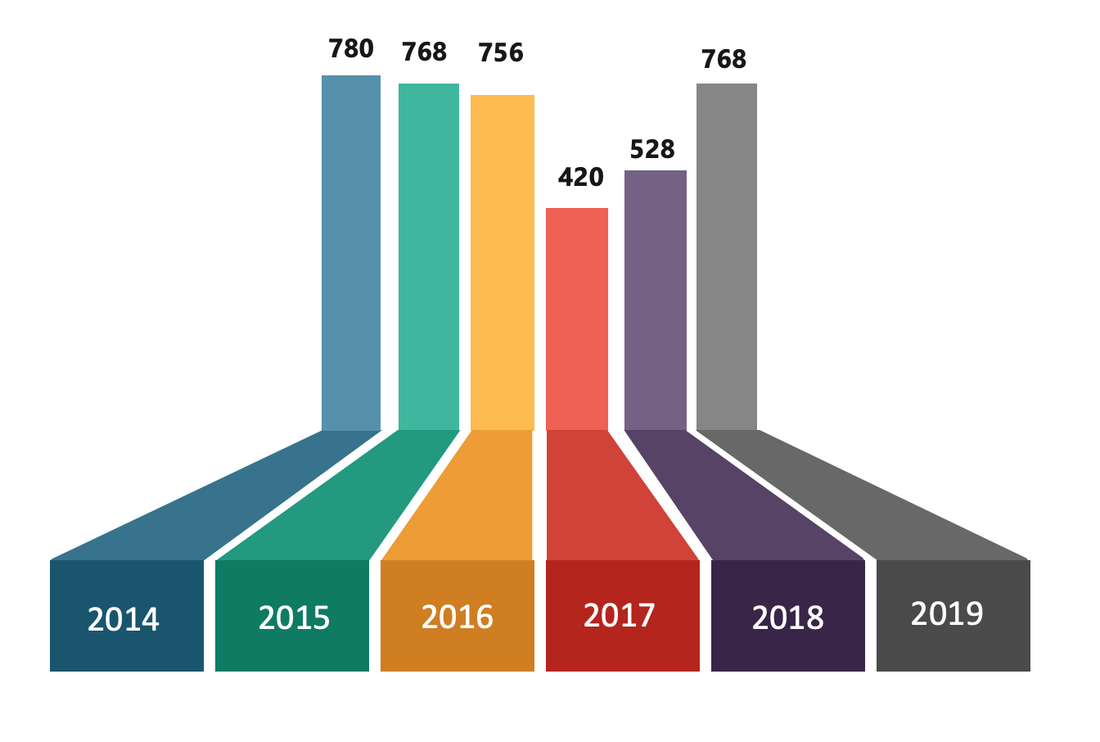

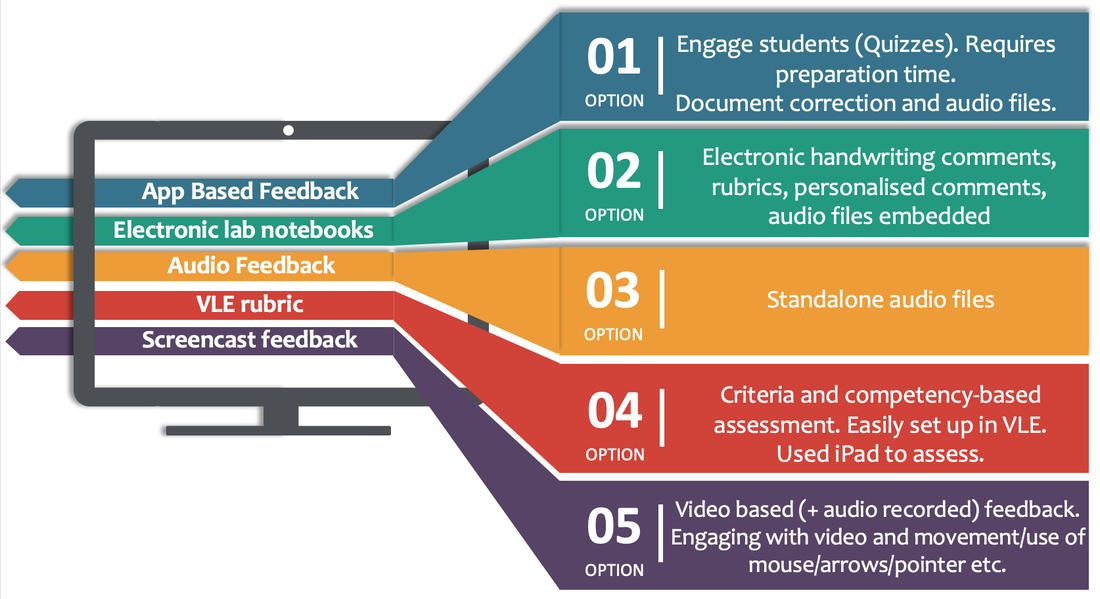

I was involved in a National Forum supported project (the TEAM project) that aimed to develop technology enhanced assessment methods in practicals across science and health. It is worth noting that one of the project's four themes centred specifically on 'digital feedback', while some of the others had feedback elements built in to them (such as electronic lab notebooks, pre-practical quizzes combined with app-based quizzes, rubrics etc.). When we reflected on the project, we realised that while we were focusing on assessment at the beginning, all our work was engaging with student feedback provision in parallel.

My other app of choice has been 'Notability'. It's primarily a note taking app that works well on the iPad with the Apple Pencil. As well as annotating PDF files submitted from assignments, I started recording audio comments in the app that were integrated in the annotated PDF file. It gave me an opportunity to explain and expand on my comments - something that was concerning me with written feedback, i.e. was I explaining it enough with text? The audio and video help so much in this regard. However, one issue with this can be time. Over-explaining can make feedback too long, and this can mean students won't engage. Turnitin has limited its feedback to 3 minutes, a feature that ensures you explain concisely while importantly controlling both your, and the student's, workload. Vital tip there! I will expand on my digital approaches further below.

Option 2: Engaging with Microsoft OneNote, I was able to design assessments and provide students with collaborative spaces for peer learning. A key element of this software package was the opportunities it provided around feedback. For example, I could click, record and place my audio comment at the relevant part of the assignment; I could annotate the submission with comments and, with the iPad and stylus, could handwrite comments also. I found this helpful to link and connect comments with relevant aspects of the submission. Equally, the software allows typed text comments. In addition to this, I worked on integrating rubric feedback in to the OneNote reports, to support learner feedback corresponding to specific criteria.

Option 3: Another element I commenced was recording standalone audio files that I could send via email to students. I found this helpful for group project submissions as it supported the dialogic process I had established with the groups. After sharing audio feedback with teams, I could use class time to engage and discuss it further, continuing the conversation.

Option 4: Engaging with our institution's virtual learning environment (Moodle), I developed a set of rubrics around assessing a skill or competency. Here, I was able to assess while providing immediate feedback via the rubric. Using an iPad and stylus, I was able to 'check' the relevant rubric boxes on each student's virtual learning environment based rubric. Before the student left the room, they could access their rubric feedback and grade. While very beneficial, I find rubrics alone, without personalised comments, can cause a disconnect in the feedback process. Don't get me wrong, they can work very well, especially if co-created with students, but I feel they are complemented and enhanced by the additional incorporation of open comments from the educator.
I found taking that approach more beneficial for the learner, as the rubric may not capture aspects that could support that specific individual.

Option 5: I became a big fan of screencasts relatively early in my teaching career. I like various aspects of 'tech' and was keen to engage where it supported learning. I was able to make screencasts to complement my teaching. Over the years, I got more in to the video and audio quality of these, as well as finding new platforms for sharing my content. But I often found I needed to make my videos more engaging, to help the viewer. I was successful in applying for funding to support the development of customised sketch diagrams, and this brought my feedback videos to a new level. Equally, I began to invest in various software packages which facilitated mouse tracking. These simple approaches were very effective and supported the ways I was providing feedback. I was able to explore new ways to engage my students with the feedback I was providing. As we have now entered a sphere of hybrid/hyflex/blended/online/remote learning etc., with this expertise around feedback, in addition to a grounding in the literature and evidence base around feedback, I feel I am very aware of the importance and potential of feedback, and hopefully I can continue to build on my work and improve how I support my students.

But what is feedback without uptake and action?

At this stage I have described my chronological journey enhancing feedback for learners. However, as I have often mentioned to date, how a student engages with feedback, and develops an action from it, is equally important to consider. My incremental marking system engaged learners with the mindset of improving based on engaging with, and acting on, the feedback received. The self-assessment worksheets engaged the students with reflection on their work, meaning they are considering their work as they submit. I found this change again impacts their mindset; they seemed to care more about their submission and were looking forward to seeing what I thought of it, reading through the comments. Positioning feedback review sessions and feedback dialogue opportunities in to class time can enhance this uptake and engagement further, while asking students to mention an element of feedback on a previous submission they acted on gets them in to the mindset of recognising how feedback can support their future work. It's worth knowing that all the effort you put in to feedback needs to be complemented by processes and systems to support its uptake. Engage with your learners, speak to them and most importantly listen to what they are saying about feedback. Together, both groups can ensure your feedback makes a big impact.

In my reflection piece below, I will review and consider some elements of where this feedback journey has taken me so far.

Supporting Feedback Enhancement with System Changes

Feedback needs attention and supports in place. For example, for first year students transitioning to higher education, explaining what feedback is and what its purpose is should be considered. We then need to ensure we develop consistent approaches across programmes to embed familiarity for learners. Reflecting, I feel a key approach that advanced uptake of my feedback was the incremental marking scheme, as it propelled students to seek ways to improve each submission - with the realisation that feedback facilitates this.

Does Feedback Actually Work?

Reflecting on my experiences, I feel feedback on its own only truly resonates with a small proportion of a class. Feedback needs to be more of a culture than a simple process. It needs a framework of support, consistent across modules and programmes. For example, first years transitioning in to Higher Level may need feedback to be explained to them in simple terms, with opportunities to realise its role and potential for learning. Educators need to ensure feedback is more facilitative or action focused, as opposed to content focused. How educators consider their feedback is important - is what is provided supporting future learning and improvement, or is it just fixing the mistakes, grammar or spelling errors? Feedback uptake needs to be promoted, and monitored, ensuring engagement and synthesis of the learning takes place. I found building in feedback review times helpful, as well as providing a task to students to highlight an aspect of the previous feedback received which they have taken on board. It makes them reflect, think and identify one element they are taking forward to improve. If this happens across the board, they identify several improvement areas. I am proud of my incremental marking system, and the impact it had on feedback engagement and uptake. Since its inception, I stopped seeing the same mistakes being made on subsequent assignments.
So yes, reflecting, I still feel feedback can work, but for a better reach of uptake and action, elements and structures around it need to be considered.

Pilot Power & Partnering with Students

The changes and innovations I put in place were informed by pilots and collaborations with students, which helped me to reflect on and evaluate processes. I learned quite quickly the power and importance of designing pilots initially with small groups. It built my confidence in what I was doing, while allowing an opportunity to trial and make improvements to elements too, changes that are student-informed and enhance their learning.

Dialogic Approaches to Feedback

Like many elements of teaching, didactic or one-way traffic approaches can often restrain learning potential. Similarly with feedback, I have found building in opportunities to reflect and discuss feedback helpful. We often hear of feedback loops, and the term 'closing the loop', but I do like David Carless's description of a feedback spiral (Carless, 2018), promoting the lifelong learning element and the role feedback can play in this. I think this takes things to another, continuous learning, level and it is something that really impacted my way of thinking around feedback.

Does Technology Actually Help Students with Feedback?

Of course with technology, it's important not to just blindly follow a new trend. Remembering pedagogies and identifying an evidence base for interventions can support the success around technology. I feel technology should support the learning, not drive it. With regard to feedback, technology does provide so many additional avenues that can benefit learners. We can ensure that accessible approaches to feedback can be provided; for example, video and audio feedback can be viewed online, with accompanying text transcripts allowing further engagement. For international learners, translation opportunities may support further.
Personally, I've found feedback with technologies has enhanced my practice, allowing me to communicate and explain with my students in a clear way. I can use subsequent class or practical time to engage in feedback reflection and dialogue. In saying that, technology does have to be truly considered by programme teams. For example, students receiving feedback via various software platforms or apps across their modules could become overwhelmed or bombarded by well-meaning educators. I really dream of developing some sort of consistency around this, as a learner overwhelmed by excessive feedback may actually avoid it. If I had to be critical of technology for feedback (or, putting it in a more positive way, to flag aspects to consider in this space), it is important to ensure feedback also remains 'personalised'. With technology, there is the potential of 'colder' matter of fact feedback, even automated feedback, without personal elaboration and guidance. For example, a VLE rubric can provide feedback based on criteria along a scale; however, the text is often generic and many don't add personalised comments on top of the rubric to support future actions around improvement. Technology has the potential to succeed in this space, but you have to make it work for you and your students. Putting yourself in their shoes can really ensure the benefits are truly met.

Beating the bombardment? Answer = FEATS; bringing it all together - what an amazing model!

While I am proud of my achievements around feedback, I still feel I have so much to yet improve on. Digital feedback sounds fantastic for students, but many don't put themselves in the position of the student being 'bombarded' by all these various types of feedback. While meant as a support, learners may find this overwhelming, unable to synthesise and act on the feedback across modules. It has to be brought together - it has to! I attended a session on feedback in our Institution, led by Dr. Naomi Winstone and Dr. Rob Nash.
As part of this, they presented the University of Surrey's FEATS platform, co-created with students working with Dr. Winstone (see Figure 8 above). Here, students are able to categorise the feedback they receive in one place, meaning they can learn the aspects of their work requiring further attention and those in which they are excelling. It provides a platform to help synthesise the feedback, and allow learners to see the gaps they need to work on and fill. A superb innovation, so student centred, yet staff can also use this to support their students further and even monitor implementation of feedback category areas. So, you can see, I still have a lot to accomplish!

Workload

One aspect of an educator's workload that has significantly changed in recent years has been around providing feedback. Looking back, it is an area that I spend a huge amount of time on. I provide feedback on all continuous assignments, projects and laboratory exercises, activities and reports. An immense amount of effort and time. It is important for me, and my colleagues, to manage this feedback workload yet still achieve the role of feedback in enhancing our students' learning experience and associated work. Could tweaking assessment be the key, across programmes? Can we have programme feedback? A lot to ponder on.

Have I it All Mastered? Can I improve?

While I feel I have made a significant contribution to enhancing feedback with my students, I still feel I have a lot to learn and implement. I would like to work more on my feedback becoming more actionable, and track its implementation across future assignment submissions. Ultimately, a big issue for me is the 'bringing together' of feedback across stages, or programmes, for students. It is a big initiative to consider though, one I feel needs cross-departmental buy-in. With many academics having their own approach to feedback, it may be difficult to bring it all together.
However, with the new digital approaches and increased digital confidence amongst staff, perhaps getting a programme platform for tracking feedback approaches may be more feasible now. I'm currently working with the MyFeedback Moodle plugin to further investigate its potential in this space. There is more 'digital' feedback than ever at the moment; however, I still get drawn to the potential for feedback bombardment to overwhelm students. It will be so important to put the learner at the centre of everything as we navigate a post-COVID future, and while assessment will be a major discussion point, feedback has to be part of these considerations also. I do see that the approach to assessment can influence feedback directly. As we enter a post-COVID world, final exams could even become a thing of the past, with a more continuous approach to assessment. However, this has to be approached with caution, as over-assessment of students could become an unsustainable reality. Synoptic, integrated assessment could represent the way to move forward, and in situations like this, an approach of collating feedback in a holistic way might end up being the only way forward yet!

Walk the Talk!

Finally, I commenced this post with a reflection on personal experience, and I will end with the same. In the summer of 2018, I did an Associate Certificate in Graphic Design as a PD opportunity. Interestingly, I was specifically praised by the course instructors for how I received, listened to and acted on the feedback they provided. It was highlighted on a few occasions. So as a learner, I recognise the potential of feedback and, in showing how much I value it, practise what I preach!

Citations mentioned in the text:

"So this was my first experience of a proctored exam - and I was the one being examined!"

So firstly, what does 'proctored' actually mean? It refers to the remote, online equivalent of someone neutral (the proctor) invigilating or supervising a test or exam. The role of the proctor is often defined as ensuring the identity of the test taker and checking the integrity of the test taking environment. It is now quite popular in certain countries where online exam assessment has become ever-present. It draws debate from many stakeholders, with views spanning a broad spectrum, from maintaining the integrity of exams on one side to intrusion on privacy and inducing anxiety on the other. Here, I thought I would outline the experience gained from being a student on a course which employed online proctoring for the final exam.

Preparation for the exam

In order to ensure all was in working order, 72 hours in advance of the exam, I was advised to download and install particular software on my laptop before taking an exam 'tutorial'. As part of the software installation process, my camera, microphone and screen were tested. All good, plenty green ticks! The automated step-by-step tutorial helped me become familiar with the software for the exam (flagging questions for follow-up, moving to next questions, submitting etc.). Once I then confirmed the date/time/exam I was taking, my focus for the coming days shifted to final preparations and review of course notes.

The day of the exam

On the day of the exam, I already had a lot of good fortune on my side. I had a quiet house, with the kids in school. Thankfully, our broadband is able to handle both of us working online at the same time. I mention these, as I know not everyone would have these options - it is important to realise this. I can't imagine doing an exam like this, or any exam for that matter, in a noisy environment or one where broadband can suddenly become unstable, or where you even have some added anxiety about this possibly happening. The platform advised the system would be open for access 15 minutes ahead of the exam time. After logging in, my laptop functionality (microphone, speaker, screen) was checked once more - more green ticks after a slight hiccup with it saying my microphone wasn't working - but after speaking slightly louder, another green tick. It's worth mentioning that the software 'takes over' your full screen, preventing the viewing of any other software (your screen is also recorded as you perform the exam). At this point, I had the option to 'connect to your supervisor'. A message popped up that I had a wait time of 15 minutes - it reminded me of those countdown timers on ticketmaster.ie! Then suddenly, I was connected.
The supervisor introduced themselves (I couldn't see them, just hear them), and I was asked to verify my identity - note - have your driver's licence/passport closer to hand than I did! After a dash to find a suitable form of ID, I was then given a few tasks:

At this stage, I was reminded it was a no material/notes exam, no phones allowed and also to perhaps get a glass of water. The supervisor told me they would be recording the exam/screen/audio/camera and that a chat option was present throughout should I require any assistance. Finally, I was wished the best of luck and reminded that the Institution who set the exam takes any form of infringement very seriously - a lovely note to end on :-) Then the exam commenced....

The exam experience

Ultimately, time flew by. I was conscious throughout that my 'supervisor' was present in the background watching/recording/listening, as I had the bright green light of my laptop's camera permanently on above my screen, but personally I didn't find it too off-putting. I was more focused on the questions and the countdown timer in the platform, which I was monitoring throughout. You do get an automated time reminder with 30, and 5, minutes remaining. The option to flag certain questions was helpful, allowing me to know which ones to revisit at a later stage. At the end of the exam, the system auto-submits your answers - but you can submit sooner should you wish. You receive your overall exam outcome immediately (thankfully, I made it through), but no feedback on the questions you may have missed. That was something I would have liked to see. When it ends, it ends. No discussion with the supervisor, no walking out with colleagues to have a debrief or conversation about the exam. I realise now how much help that can be sometimes, to share experiences and support each other.

Proctoring for all?

Does it help the exam process?

It was mentioned during the course that proctoring was in place to protect the 'integrity of the exam'. This appears to be a common term from some of the reading online I did recently around the topic. Other articles I came across state how it stops any cheating, but many of those exact same articles go on to describe how the system can be compromised, and how companies need to keep evolving their approaches to verification and examination. The initial introduction and official document verification did confirm I was indeed....me!

Did it feel invasive? Slightly, in all honesty; it was very strange doing a 360 tour of my home office initially. Was the room tidy? - of course! My desk was spotless, apart from my laptop and a glass of water. I had spent some time earlier moving notes/folders etc. out of the room, and making sure all was even tidier than normal! Also, I'm not a big fan of installing software on my personal laptop that someone else insists I do, and that I can't validate/review etc. But I guess it's an important part of the proctoring process adopted by the institution. I should point out that the Institution mentioned they can facilitate people taking the exam at their premises also. I'm unsure if this implies in-person invigilation, or a way for people with poor broadband to perform the exam with the online proctoring platform there.

Was there pressure? Yes, time was ticking all the time! In comparison to other exams I have done, this was all about interpreting MCQs based on various scenarios - so it was very different from writing content. You knew the content, but it was more based on applying that knowledge and understanding based on how you interpret certain situations. You aren't informed if you got one right/wrong during, or at the end of, the exam, so you proceed in (and with!) good faith! I felt anxious at times, even when I was waiting for it to begin, but that can be the case with any exam.

Would proctoring suit everyone? No.
I don't think this is an approach that would suit everyone. Firstly, considering your personal exam environment, and as I mentioned at the outset, good broadband and a quiet, uninterrupted space are not always guaranteed for many. Re. accessibility, I read a thread on Twitter around that time from Dara Ryder, CEO at AHEAD.ie, that really resonated with me. I link the thread here and encourage you to read it - from considering the points made throughout, you may think differently about proctoring.

Alternatives

Is it an efficient process? Yes, I guess so from an examiner point of view. Once banks of questions are set up in the system, an exam can be carried out and corrected in real time, all supervised by someone neutral to the process.

Does the assessment format reach its learning potential? No - not really. Without confirmation of what was correct, and incorrect, with time to reflect on the correct scenario, further learning is definitely prevented. It's a pity this is the case, but perhaps it is due to keeping the exam questions 'secure'. Even though the exam was earlier today, I feel like you do after a good stand-up comedy show - I used to leave a Tommy Tiernan gig having laughed for hours at his numerous, and humorous, stories (and side-stories), yet unable to remember most of them when I got back home. Likewise today, you are bombarded by so many scenarios to consider, each with long text answers that occupy your mind, and prevent you from remembering them!

What did I learn? Going through this process caused me to empathise with students who are being asked to take this approach all the time, especially those who may be feeling anxious and intruded on by proctoring. For many learners, it has the potential to increase the pressure of an already high-pressure situation. Certain personality types may be quite comfortable with this approach, but I imagine it may not be considered 'comfortable' for the majority.
Educators have to continue reflecting, partnering with students, and learning from current experiences around assessment, in order to navigate how remote examinations will take place as we potentially move forward to a hybrid learning world. See some recent updates at the foot of this post.

How could you avoid proctoring?

'Assessment design', 'alternative assessment' and 'reimagining assessment' are terms at the forefront of Higher Education in a COVID/post-COVID world. How can educators redesign their approach to assessment to ensure it remains authentic? Can student partnerships support this redesign? Can open book exams, with non-google-able questions, be designed? Can we include reflections, and justifications for certain answers selected?

In addition to teaching in a digital world, assessment is really under the microscope right now, and it will be an interesting area in the coming years to reflect on and see how far we have come. In recent years, the focus on assessment of/for/as learning has brought the field on in leaps and bounds. It will be fascinating to see how this evolves further over time. While Institutional Teaching & Learning departments provide great resources around alternative assessments, another good place to start for ideas is Sally Brown and Kay Sambell's post for further examples. However, there are pre-pandemic reports elsewhere noting that while real world authentic assessments can be beneficial (e.g. Sotiriadou et al., 2019), they may not necessarily solve all associated issues (Ellis et al., 2019). That said, it's worth noting that since lockdowns commenced in March 2020 due to the pandemic, educators have become much more skilled and creative around authentic assessment design. It will be interesting to follow the literature and scholarship as we move forward now.

FYI - JISC produced a report last year that presents five principles and targets for assessment in a 2025 digital world (Authentic, Accessible, Appropriately automated, Continuous, Secure). I link the report here.

FYI - An interesting article can be found here on The Verge's website around proctoring/learner anxiety.

Update 1...another viewpoint [APRIL 2021]

At the recent OERxDomains2021 online conference, a presenter (Emily Carlisle-Johnston from UWO in Canada) mentioned an interesting scenario - one certainly worth being aware of. She described how educators were attending a training session around implementing remote proctoring in their institution. However, once they realised the amount of work and digital aptitude required to set it up in the first instance, combined with learning it would be them having to view entire videos of any examinations flagged by the AI/remote system as requiring attention, many ended up pursuing other assessment strategies! Certainly another insight worth being aware of: it is not simply the 'plug and play' approach many believe it to be.

Update 2...another great overview [OCTOBER 2021]


Ronan Bree, Education Developer, Science Lecturer


Any opinions expressed here represent my own and not those of my employer.
